The Challenge

Most task apps use corporate grays or high-contrast primaries. Neither works for ADHD users.

Gray feels lifeless. Bright colors overstimulate.

I needed something in between: calm but not boring, colorful but not overwhelming.

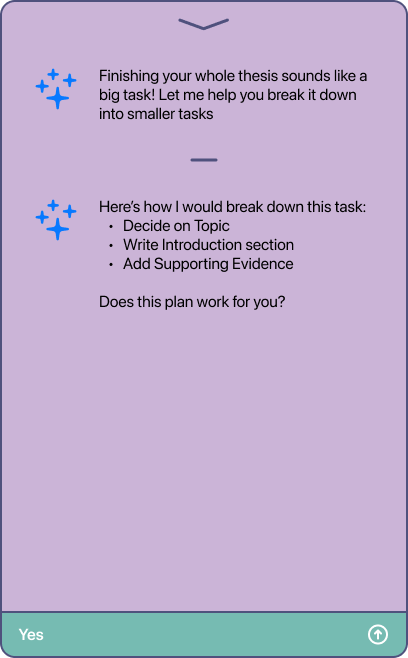

The Palette:

Primary:

- Mist Lavender #E9D9EB (background)
- Heather Violet #CBB4D7 (cards, surfaces)
- Royal Purple #7D60A4 (buttons, accents)

Accent:

- Teal #3C9E91 (input bar, section headers)

Text:

- Charcoal Plum #2A1E3E (primary text)
- Slate Violet Gray #4F517D (secondary text)

Accent:

- Soft Green #8CD790 (success states)

Why These Colors:

Purple: Associated with calm and creativity. Not the aggressive red/orange often used for productivity. Feels supportive, not demanding.

Teal: Provides energy and focus without being harsh. Works as an accent without competing with the purple base.

Soft, desaturated tones: ADHD users can be sensitive to overstimulation. Muted colors reduce visual fatigue while maintaining enough contrast for clarity.

WCAG Compliance: Every color pairing was tested. All text meets the AA standard for normal-size text (a contrast ratio of at least 4.5:1).
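For anyone who wants to run the same kind of check, here is a minimal sketch of the WCAG 2.x contrast-ratio formula in Python. The function names, the `PALETTE` keys, and the pairings in `PAIRS` are my own illustrative assumptions, not an exact record of which combinations were tested.

```python
# Sketch of a WCAG 2.x contrast check. Names and pairings are illustrative.

def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG 2.x)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a #RRGGBB color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """Contrast ratio between two colors; the order of arguments does not matter."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(text: str, surface: str, minimum: float = 4.5) -> bool:
    """True if the pair reaches the AA threshold for normal-size text."""
    return contrast_ratio(text, surface) >= minimum


# Hex values from the palette above; the key names are hypothetical.
PALETTE = {
    "mist_lavender": "#E9D9EB",
    "heather_violet": "#CBB4D7",
    "charcoal_plum": "#2A1E3E",
    "slate_violet_gray": "#4F517D",
}

# Assumed text/surface pairings; replace with the ones your UI actually uses.
PAIRS = [
    ("charcoal_plum", "mist_lavender"),
    ("charcoal_plum", "heather_violet"),
    ("slate_violet_gray", "mist_lavender"),
]

if __name__ == "__main__":
    for text_name, surface_name in PAIRS:
        ratio = contrast_ratio(PALETTE[text_name], PALETTE[surface_name])
        verdict = "pass" if ratio >= 4.5 else "fail"
        print(f"{text_name} on {surface_name}: {ratio:.2f}:1 ({verdict})")
```

Run it against every text and surface combination that appears in the interface. A text color that passes on the page background will not necessarily pass on a darker card surface.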